Agenta vs diffray

Side-by-side comparison to help you choose the right product.

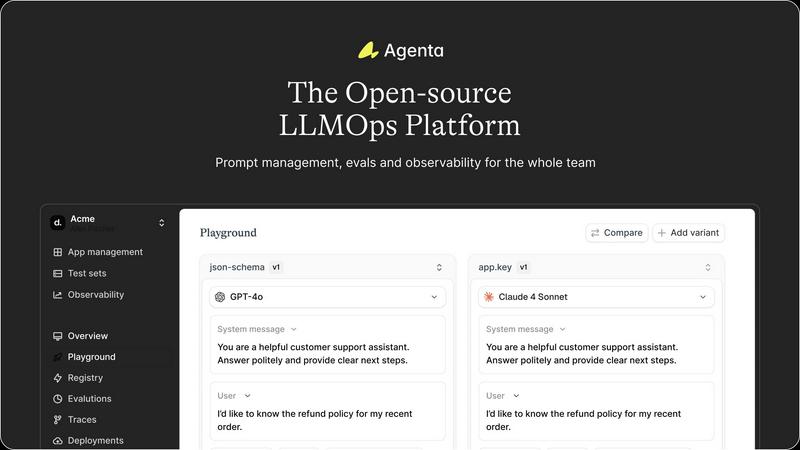

Agenta

Agenta is an open-source LLMOps platform for centralized prompt management and evaluation.

Last updated: March 1, 2026

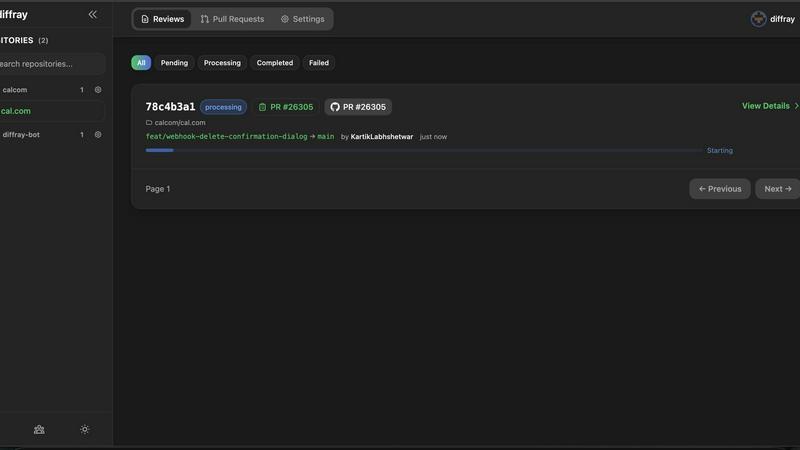

diffray

diffray's multi-agent AI code review identifies real bugs while reducing false positives by 87%, making code review more efficient.

Last updated: February 28, 2026

Feature Comparison

Agenta

Unified Playground & Versioning

Agenta provides a centralized playground where developers and non-technical team members can experiment with prompts, parameters, and foundation models from different providers side by side. Every iteration is automatically versioned, creating a complete audit trail of changes. Because the design is model-agnostic, teams can compare OpenAI, Anthropic, open-source, and other models in the same environment and avoid vendor lock-in.

Automated & Integrated Evaluation Framework

This feature replaces guesswork with evidence-based development. Teams can create systematic evaluation workflows using LLM-as-a-judge, custom code evaluators, or built-in metrics. Crucially, Agenta allows for evaluation of full agentic traces, testing each intermediate reasoning step, not just the final output. This enables precise performance validation and comparison between different experiment versions, ensuring only improvements are promoted.
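As a rough illustration of per-step evaluation with a custom code evaluator, the sketch below scores every intermediate step of a trace and promotes a candidate version only if no step regresses. It is not Agenta's API: the `Step` shape, the `non_empty` evaluator, and the `is_improvement` promotion rule are all hypothetical names and choices made for this example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """One intermediate step of an agent trace (hypothetical shape)."""
    name: str
    output: str


# An evaluator maps a single step to a score between 0.0 and 1.0.
Evaluator = Callable[[Step], float]


def non_empty(step: Step) -> float:
    """Toy custom code evaluator: 1.0 if the step produced any output."""
    return 1.0 if step.output.strip() else 0.0


def evaluate_trace(steps: list[Step], evaluator: Evaluator) -> dict[str, float]:
    """Score every intermediate step, not only the final answer."""
    return {step.name: evaluator(step) for step in steps}


def is_improvement(baseline: dict[str, float], candidate: dict[str, float]) -> bool:
    """Assumed promotion rule: no step regresses and at least one improves."""
    no_regression = all(candidate[name] >= baseline[name] for name in baseline)
    some_gain = any(candidate[name] > baseline[name] for name in baseline)
    return no_regression and some_gain


# Two versions of the same two-step agent; v1 fails at the retrieval step.
v1 = [Step("retrieve", ""), Step("answer", "Paris")]
v2 = [Step("retrieve", "doc-17"), Step("answer", "Paris")]

scores_v1 = evaluate_trace(v1, non_empty)
scores_v2 = evaluate_trace(v2, non_empty)
promote_v2 = is_improvement(scores_v1, scores_v2)
```

Both versions return the same final answer, so a final-output check alone would not distinguish them; the per-step scores show that only the second version retrieved anything.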

Production Observability & Debugging

Agenta offers comprehensive observability by tracing every LLM application request in production. Teams can monitor performance, detect regressions with live evaluations, and pinpoint the exact failure point in complex chains or agent workflows. Any problematic trace can be annotated collaboratively or instantly converted into a test case with one click, closing the feedback loop between production issues and development.
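The idea of turning a problematic production trace into a test case can be sketched in a few lines. This is a minimal illustration under assumed data shapes, not Agenta's actual schema or SDK: `Trace`, `RegressionCase`, and `trace_to_test_case` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class Trace:
    """A recorded production request (hypothetical shape)."""
    trace_id: str
    inputs: dict
    output: str
    annotations: list[str] = field(default_factory=list)


@dataclass
class RegressionCase:
    """A test case derived from a trace (hypothetical shape)."""
    inputs: dict
    expected_output: str
    source_trace: str


def trace_to_test_case(trace: Trace, corrected_output: str) -> RegressionCase:
    """Reuse the failing trace's inputs and pair them with the corrected output."""
    return RegressionCase(
        inputs=dict(trace.inputs),  # copy so later edits to the trace do not leak in
        expected_output=corrected_output,
        source_trace=trace.trace_id,
    )


bad_trace = Trace(
    trace_id="tr-1",
    inputs={"question": "Capital of France?"},
    output="Lyon",
    annotations=["wrong city"],
)
case = trace_to_test_case(bad_trace, corrected_output="Paris")
```

Keeping the source trace identifier on the test case preserves the link between the production failure and the regression test that guards against it.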

Collaborative Workflow for Cross-Functional Teams

Agenta breaks down silos by providing tools for every stakeholder. Domain experts get a safe UI to edit and test prompts without code. Product managers can run evaluations and compare experiments directly. Developers maintain full API control and parity with the UI. This brings PMs, experts, and engineers into a single integrated workflow for experimenting, versioning, and debugging with real data.

diffray

Specialized AI Agents

diffray employs a fleet of over 30 specialized AI agents, each focusing on a specific domain such as security, performance, or SEO. This specialization is intended to produce more thorough, contextual reviews than single-model tools provide.

Context-Aware Code Analysis

By analyzing the full context of a repository rather than just the immediate changes, diffray provides insights that are highly relevant and actionable. This leads to significant improvements in code quality and reduces the number of irrelevant comments.

Seamless Integration

diffray is designed for easy integration with popular development platforms like GitHub, GitLab, and Bitbucket, as well as on-premise setups. This ensures that teams can incorporate diffray into their existing workflows without disruption.

Reduced Review Time

With diffray, engineering teams can cut pull request review time from an average of 45 minutes per week to just 12 minutes per week. This turns what was once a chore into a streamlined process.
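Taking the quoted figures at face value (45 minutes falling to 12 minutes), the relative saving can be worked out directly. The helper name `percent_reduction` is only for this example.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the relative reduction from `before` to `after`, in percent."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


reduction = percent_reduction(45, 12)
print(f"{reduction:.1f}%")  # prints "73.3%"
```

In other words, the claimed change is a reduction of roughly 73% in review time, or 33 minutes saved.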

Use Cases

Agenta

Streamlining Complex Agent Development

Teams building multi-step AI agents with frameworks like LangChain can use Agenta to manage the entire lifecycle. The unified playground allows for iterative prompt tuning for each step, while the full-trace evaluation capability is critical for validating the agent's reasoning process. Observability tools then help debug intricate failures in production, turning errors into actionable test cases.

Centralizing Enterprise Prompt Management

In large organizations where prompts are managed across different departments and tools, Agenta acts as the single source of truth. It centralizes all prompt versions, experiments, and evaluation results, enabling governance and collaboration. Non-technical domain experts can directly contribute to prompt optimization through the UI, accelerating iteration cycles without developer bottlenecks.

Implementing Rigorous LLM Evaluation Pipelines

For teams requiring robust validation before deployment, Agenta provides the infrastructure to build automated evaluation pipelines. By combining human evaluators and LLM judges, teams can create a systematic process for scoring experiments against key performance indicators. This ensures every prompt or model change is backed by quantitative and qualitative evidence, reducing risk.

Enhancing Production LLM Application Reliability

Post-deployment, engineering and product teams use Agenta's observability suite to monitor application health and user interactions. Live evaluations detect performance drifts, while detailed traces allow for rapid root-cause analysis of issues. This continuous monitoring and feedback loop is essential for maintaining and improving the reliability of customer-facing AI features.

diffray

Enhancing Code Quality

Development teams can use diffray to improve the overall quality of their code by identifying not just superficial style issues but also deeper, context-dependent problems. This leads to cleaner, more maintainable codebases.

Accelerating Development Cycles

By reducing the time spent on code reviews, diffray enables teams to accelerate their development cycles. This allows for faster iteration and quicker deployment of features, improving responsiveness to market demands.

Increasing Team Collaboration

diffray fosters better collaboration among team members by providing actionable insights that can be discussed and resolved collectively. This promotes a culture of quality and continuous improvement within the team.

Streamlining Onboarding

New developers can get up to speed faster with diffray's contextual feedback and insights. By highlighting best practices and common pitfalls, diffray aids in the onboarding process, making it easier for new team members to integrate.

Overview

About Agenta

Agenta is an open-source LLMOps platform that provides infrastructure for teams building applications with large language models (LLMs). It is designed for engineering teams, product managers, and domain experts who need to collaborate to ship reliable, production-grade AI products. Its core value proposition is an integrated, model-agnostic approach that consolidates the fragmented LLM development lifecycle into a single, collaborative workflow. It addresses common pain points: prompts scattered across communication tools, siloed teams, and a lack of systematic evaluation and observability. By offering a unified playground for experimentation, a framework for automated and human-in-the-loop evaluation, and observability tools, Agenta enables teams to iterate with evidence, debug with precision, and validate every change before deployment. It works with popular frameworks such as LangChain and LlamaIndex and with any model provider, so it fits into existing tech stacks without vendor lock-in and can serve as a central hub for LLMOps practices.

About diffray

diffray is a multi-agent AI code review platform designed to address the limitations of traditional single-model tools. It is tailored for software development teams that need precision and context in their code reviews. Unlike generic AI reviewers, which often overwhelm developers with irrelevant style suggestions while missing critical issues, diffray uses a fleet of over 30 specialized AI agents. Each agent focuses on a distinct area, including security vulnerabilities, performance optimizations, bug detection, framework-specific best practices, and SEO considerations for web applications. This targeted approach lets diffray review not only the changes proposed in a pull request but also the broader context of the entire repository. As a result, diffray reduces false positives by 87% and triples the identification of actionable issues. It integrates with GitHub, GitLab, Bitbucket, and on-premise setups, and cuts review time from an average of 45 minutes per week to 12 minutes per week. It is built for professional development teams that prioritize actionable insights and contextual understanding over generic feedback.

Frequently Asked Questions

Agenta FAQ

Is Agenta compatible with my existing AI stack?

Yes, Agenta is designed for seamless integration. It is model-agnostic, working with OpenAI, Anthropic, Azure, open-source models, and more. It also integrates natively with popular LLM frameworks like LangChain and LlamaIndex, allowing you to incorporate its evaluation, versioning, and observability features without rewriting your application logic.

How does Agenta handle collaboration between technical and non-technical roles?

Agenta provides UI and API parity. Developers work via code and API, while product managers and domain experts can use the web interface to experiment with prompts, run evaluations, compare results, and annotate traces without writing a single line of code. This shared environment ensures everyone is aligned on the same data and experiments.

Can I evaluate complex multi-step AI agents, not just simple prompts?

Absolutely. A core strength of Agenta is its ability to evaluate full execution traces. For agents built with chains or sequential reasoning, you can evaluate and compare the output and logic at each intermediate step, not just the final answer. This provides deep insight into where an agent succeeds or fails during its reasoning process.

What does "open-source" mean for Agenta's deployment and pricing?

Agenta is a true open-source platform (Apache 2.0 license), meaning you can self-host the entire software on your own infrastructure for free, maintaining full control over your data and workflows. The company also offers a cloud-hosted enterprise version with additional features and support, providing flexibility based on your team's needs and scale.

diffray FAQ

How does diffray reduce false positives?

diffray reduces false positives by leveraging over 30 specialized AI agents that analyze code with context-awareness. This targeted approach allows for a deeper understanding of the codebase, leading to more accurate issue detection.

Can diffray be integrated with existing tools?

Yes, diffray seamlessly integrates with popular platforms such as GitHub, GitLab, and Bitbucket, as well as on-premise setups. This ensures minimal disruption to existing workflows while enhancing the code review process.

What types of issues can diffray detect?

diffray can detect a wide range of issues, including security vulnerabilities, performance bottlenecks, bug patterns, deviations from framework-specific best practices, and SEO problems in web applications.

Is diffray suitable for small teams?

Absolutely. While diffray is designed for professional development teams, it is equally useful for small teams looking to improve code quality and efficiency. Its insights can help a team of any size maintain high standards in its codebase.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed to centralize prompt management, evaluation, and observability for AI development teams. It falls within the developer tools and MLOps categories, specifically targeting the workflow complexities of building reliable large language model applications. Users may explore alternatives for various reasons, including specific integration requirements with their existing tech stack, budget constraints that necessitate different pricing models, or the need for features that align with a different stage of their AI development lifecycle. Platform needs, such as deployment flexibility or team collaboration structures, also drive this evaluation. When selecting an alternative, key considerations should include the platform's compatibility with your current infrastructure and preferred LLM providers, the depth of its evaluation and observability tooling, and its approach to version control and collaboration. The ideal solution should seamlessly fit into your development pipeline, enhancing productivity without creating new silos.

diffray Alternatives

diffray is a multi-agent AI code review platform designed to improve the software development process by delivering precise, actionable insights. It belongs to the development tools category and focuses on improving code quality and reducing review times. Users often seek alternatives because of pricing, feature sets, or platform compatibility needs, typically looking for a solution that better fits their team's workflows and requirements. When evaluating an alternative to diffray, consider the tool's ability to integrate with existing systems, the accuracy of its code analysis, and whether it offers specialized features for your development stack. User experience and support services also matter, since they affect whether the chosen tool meets your team's expectations and improves productivity.