Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right product.

Hostim.dev deploys Docker and Compose apps with built-in databases on EU bare-metal servers, with predictable pricing.

Last updated: March 1, 2026

OpenMark AI benchmarks over 100 LLMs on your tasks, delivering actionable insights on cost, speed, quality, and stability without any setup.

Last updated: March 26, 2026

Visual Comparison

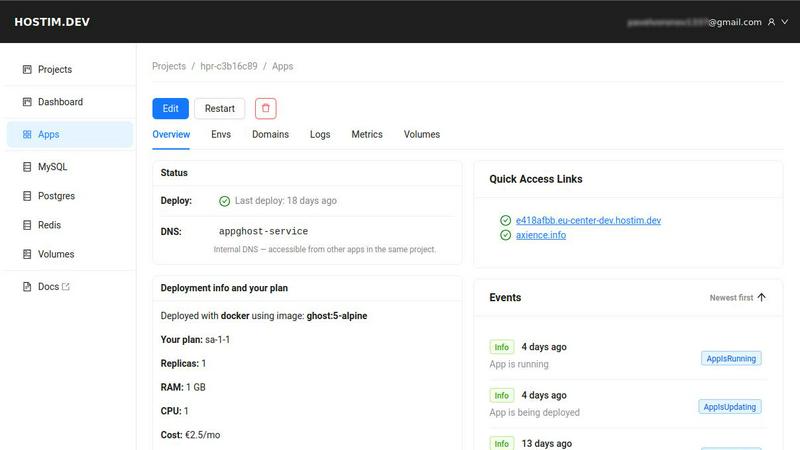

Feature Comparison

Hostim.dev

Multi-Source Deployment (Docker, Git, Compose)

Hostim.dev supports several entry points for deploying an application. You can launch directly from a public or private Docker image, connect a Git repository for automatic deployments on push, or paste an entire docker-compose.yml file to spin up a multi-service application. Because the platform works with standard container tooling, existing projects can migrate without being rewritten for a proprietary format, which reduces vendor lock-in.

Built-In Managed Databases & Storage

The platform treats fully managed database instances and persistent volumes as first-class features. PostgreSQL, MySQL, and Redis can be provisioned with one click, and each service is automatically configured and securely linked to your application containers. Connection credentials are injected as environment variables, so a backend framework (such as Django, Rails, or Spring Boot) can connect without manual configuration.
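As a rough illustration of how an application might consume injected credentials, the sketch below builds a PostgreSQL connection URL from environment variables. The variable names (`POSTGRES_USER`, `POSTGRES_HOST`, and so on) are assumptions for the example, not names confirmed by Hostim.dev; check the dashboard for the names the platform actually injects.

```python
import os
from urllib.parse import quote


def postgres_url_from_env(env=os.environ):
    """Build a PostgreSQL connection URL from injected environment variables.

    All variable names here are hypothetical placeholders.
    """
    user = env["POSTGRES_USER"]
    password = env["POSTGRES_PASSWORD"]
    host = env["POSTGRES_HOST"]
    port = env.get("POSTGRES_PORT", "5432")  # PostgreSQL's default port
    database = env["POSTGRES_DB"]
    # Percent-encode credentials so characters like '@' or '/' stay valid in a URL.
    return (
        f"postgresql://{quote(user, safe='')}:{quote(password, safe='')}"
        f"@{host}:{port}/{database}"
    )
```

Most frameworks accept a URL of this shape directly, or can read the individual variables instead; passing a plain dictionary as `env` makes the function easy to test locally.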

EU Bare-Metal Hosting with GDPR Compliance

All applications are hosted on dedicated bare-metal servers in German data centers, which gives EU users high performance and low latency. Combined with per-project Kubernetes namespace isolation, this keeps data inside the EU and provides a secure foundation for GDPR compliance. For businesses handling European customer data, it avoids the legal and logistical complexity of transferring data outside the EU.

Transparent Per-Project Cost Tracking

Hostim.dev uses a simple, flat pricing model with costs broken down per project. Each project's resource consumption (CPU, RAM, and attached services) is tracked and billed hourly. This gives developers and agencies precise cost control and predictable, quotable hosting fees, makes handovers to clients clean, and avoids the surprise bills common with elastic cloud pricing.
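The arithmetic behind per-project hourly billing can be sketched as follows. The resource names and hourly rates are invented for illustration and are not Hostim.dev's actual prices.

```python
from dataclasses import dataclass


@dataclass
class ResourceUsage:
    name: str           # e.g. "app container" or "postgres"
    hourly_rate: float  # hypothetical price per hour, in EUR
    hours: float        # hours the resource was running


def project_cost(usages):
    """Sum the hourly charges for every resource attached to one project."""
    return round(sum(u.hourly_rate * u.hours for u in usages), 2)


# Hypothetical rates, for illustration only: one 30-day month is 720 hours.
client_project = [
    ResourceUsage("app container", 0.010, 720),
    ResourceUsage("postgres", 0.005, 720),
]
print(project_cost(client_project))
```

Because each project is summed separately, an agency could quote the resulting figure to a client as a monthly hosting fee.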

OpenMark AI

Task-Level Benchmarking

OpenMark AI lets users define a benchmark by describing the task in plain language. The same task can then be tested across multiple models without complex configuration or coding.

Real-Time Model Comparison

The platform provides side-by-side comparisons of real API calls to models, ensuring that users receive authentic performance metrics rather than relying on cached marketing data. This transparency enhances decision-making confidence.

Cost and Latency Analysis

With OpenMark AI, users can analyze the cost per API call and latency for each model tested. This feature is crucial for understanding the financial implications of using different AI models in real-world applications.
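To make the two metrics concrete, here is a minimal sketch of how cost per call and latency are commonly computed. It does not describe OpenMark AI's internals; the per-million-token pricing scheme and the function names are assumptions for the example.

```python
import time


def cost_per_call(input_tokens, output_tokens,
                  price_in_per_million, price_out_per_million):
    """Cost of one API call in USD, given per-million-token prices."""
    return (
        input_tokens * price_in_per_million
        + output_tokens * price_out_per_million
    ) / 1_000_000


def timed_call(fn, *args):
    """Run fn(*args) and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start
```

In practice `fn` would be a function that sends a request to a model; any callable works for trying the helper out.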

Consistency Checks

OpenMark AI emphasizes the importance of output reliability. Users can assess model performance consistency by running the same task multiple times, allowing them to make informed choices based on stability and predictability.
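A repeated-run consistency check can be approximated with a few lines of Python. This is a sketch under stated assumptions, not OpenMark AI's method: `run_task` is a hypothetical callable that sends the task to a model and returns a numeric quality score.

```python
import statistics


def consistency_report(run_task, task, runs=5):
    """Run the same task several times and summarize the spread of scores.

    run_task is a hypothetical callable returning one numeric score per call.
    """
    scores = [run_task(task) for _ in range(runs)]
    return {
        "mean": statistics.mean(scores),
        # Sample standard deviation needs at least two data points.
        "stdev": statistics.stdev(scores) if len(scores) > 1 else 0.0,
        "min": min(scores),
        "max": max(scores),
    }
```

A low standard deviation relative to the mean suggests stable output; a wide gap between `min` and `max` suggests that a single run would be a poor basis for choosing a model.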

Use Cases

Hostim.dev

Full-Stack Application Prototyping

Developers can rapidly prototype and iterate on full-stack applications by deploying a backend (e.g., FastAPI or Express.js) alongside a managed PostgreSQL database and Redis cache in a single action. The integrated stack and pre-wired connections allow teams to focus on building features rather than configuring infrastructure, dramatically accelerating the development cycle from idea to live demo.

Agency Client Project Hosting

Digital agencies can host each client's project in a completely isolated environment with dedicated resources and databases. The per-project billing model provides clear cost breakdowns for invoicing, while the simple deployment process and "clean handover" capability make it easy to transfer project ownership to the client post-launch, all on compliant EU infrastructure.

Microservices & Docker Compose Stacks

Teams running applications defined by Docker Compose can lift-and-shift their entire local development stack to production. Hostim.dev's native Docker Compose support means complex multi-service architectures, including web apps, workers, and internal tools, can be deployed without rewriting configurations for a proprietary platform, maintaining development/production parity.

Educational Projects & Portfolios

Students and developers building portfolio projects can deploy real, database-backed applications without unpredictable costs. The free trial and transparent pricing allow for hands-on learning with professional-grade tools (Docker, Git CI/CD, managed DBs), resulting in live projects that demonstrate practical DevOps and deployment skills to potential employers.

OpenMark AI

Model Selection for Development

OpenMark AI is ideal for developers who need to select the most suitable AI model for their applications. By benchmarking various models against specific tasks, teams can ensure they choose the best fit for their project requirements.

Pre-Deployment Validation

Product teams can use OpenMark AI to validate model performance before deploying AI features. This pre-deployment testing helps mitigate risks and ensures that the chosen model meets quality standards.

Cost Efficiency Analysis

Businesses can leverage OpenMark AI to analyze the cost efficiency of different models. By understanding the cost relative to output quality and latency, organizations can make informed decisions that optimize their AI investments.
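One simple way to express cost relative to output quality is a quality-per-dollar ranking. The sketch below uses invented model names and figures, and the scoring rule itself is an assumption rather than OpenMark AI's formula.

```python
def rank_by_value(results):
    """Sort benchmark results by quality score per dollar, best value first.

    Assumes every entry has a cost_usd greater than zero.
    """
    return sorted(
        results,
        key=lambda r: r["quality"] / r["cost_usd"],
        reverse=True,
    )


# Hypothetical benchmark results, for illustration only.
results = [
    {"model": "model-a", "quality": 0.90, "cost_usd": 0.010},
    {"model": "model-b", "quality": 0.85, "cost_usd": 0.002},
]
best = rank_by_value(results)[0]["model"]
```

Here the slightly lower-scoring model wins on value because it costs a fifth as much per call; a team with a hard quality floor would filter on `quality` before ranking.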

Consistency in AI Outputs

For applications requiring consistent AI outputs, OpenMark AI allows users to verify model stability through repeated task runs. This is particularly useful in scenarios where reliability and accuracy are paramount.

Overview

About Hostim.dev

Hostim.dev is a developer-focused, bare-metal Platform-as-a-Service (PaaS) built to streamline the deployment and management of containerized applications. It reduces traditional DevOps overhead by providing a direct path from code to production: developers can deploy from a Docker image, a Git repository, or a full Docker Compose file and launch a complete application stack in minutes. Managed services such as PostgreSQL, MySQL, and Redis are integrated into the workflow and pre-wired through environment variables. Every project is isolated within its own Kubernetes namespace, and all hosting runs on EU bare-metal infrastructure in Germany, which keeps data separated and supports compliance with regulations such as the GDPR. A transparent, per-project hourly billing model with cost tracking keeps pricing predictable. Hostim.dev is aimed at freelancers, agencies, startups, and SaaS builders who want a fast, integrated, and compliant deployment pipeline without the complexity and unpredictable costs of major cloud providers.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Built for developers and product teams, it helps users assess which AI model best fits their specific needs. By describing the task in plain language, users can test and compare multiple models in a single session. The platform reports cost per request, latency, scored quality, and output stability across repeated runs, so users can see variance rather than relying on a single output. This supports decision-making before AI features are deployed, helping ensure that the selected model fits workflow requirements and budget constraints. Because benchmarking is hosted and does not require configuring separate API keys, teams can focus on validating model performance. The application supports a diverse range of models and suits those who prioritize cost efficiency relative to output quality rather than merely the cheapest token pricing. Both free and paid plans are available.

Frequently Asked Questions

Hostim.dev FAQ

Can I deploy with just a Docker Compose file?

Yes, absolutely. Hostim.dev provides native support for Docker Compose. You can directly paste your docker-compose.yml content into the platform's dashboard, and it will automatically provision and wire up all the services, networks, and volumes defined within it. This allows you to deploy complex, multi-container application stacks without any platform-specific configuration.

Where is my application hosted?

All applications on Hostim.dev are hosted exclusively on bare-metal servers in enterprise-grade data centers located in Germany. Your application and data therefore reside within the European Union, which benefits performance in the region and supports compliance with GDPR and other EU data protection regulations.

Do I need to know Kubernetes to use Hostim.dev?

No, you do not need any Kubernetes expertise. Hostim.dev abstracts away the underlying Kubernetes complexity through its simplified PaaS interface. You interact using familiar concepts like Docker images, Git repos, and Compose files. The platform manages all the Kubernetes orchestration, networking, and ingress control automatically behind the scenes.

Is this platform suitable for solo developers or only teams?

Hostim.dev is designed for both solo developers and teams. Its simplicity and fast deployment are perfect for individuals working on personal projects, startups, or freelance work. For teams and agencies, the per-project isolation, clear cost tracking, and easy handover features provide the structure needed to manage multiple client projects efficiently and securely.

OpenMark AI FAQ

How does OpenMark AI work?

Users describe their tasks in plain language, and OpenMark AI runs those tasks across multiple models in a single session. It then reports metrics on cost, latency, quality, and consistency to help users make informed decisions.

Do I need API keys to use OpenMark AI?

No. OpenMark AI is designed to streamline the benchmarking process. You do not need to configure separate API keys for OpenAI, Anthropic, or Google, because the platform handles this for you.

What types of tasks can I benchmark?

OpenMark AI supports a wide range of tasks, including but not limited to classification, translation, data extraction, research, Q&A, and image analysis. This versatility makes it suitable for various applications.

Are there different pricing plans available?

Yes, OpenMark AI offers both free and paid plans. Details of these plans are available in the in-app billing section after you sign up.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) that simplifies deploying containerized applications from Docker, Git, or Compose files. It falls into the developer-centric PaaS and container hosting category, designed to reduce DevOps complexity with built-in databases and predictable EU-based hosting. Developers often evaluate alternatives for various reasons. These can include specific regional hosting requirements, different pricing models like free tiers or usage-based billing, the need for integrated CI/CD pipelines, or access to a broader marketplace of pre-configured services and add-ons. When assessing other platforms, key considerations are your core tech stack compatibility, such as Docker and Git integration, the simplicity of the deployment workflow, and the transparency of costs. Security posture, data residency compliance, and the availability of managed databases and automatic TLS are also critical for a production-ready environment.

OpenMark AI Alternatives

OpenMark AI is a web-based application designed for task-level benchmarking of large language models (LLMs). By allowing users to test over 100 models based on specific parameters such as cost, speed, quality, and stability, it caters primarily to developers and product teams who need to validate model performance before deploying AI features. Users often seek alternatives to OpenMark AI for various reasons, including pricing structures, specific feature requirements, and compatibility with different platforms or workflows. When selecting an alternative, it is crucial to consider factors like the breadth of model support, the ease of integration into existing systems, and the clarity of performance metrics provided to ensure effective and efficient benchmarking.