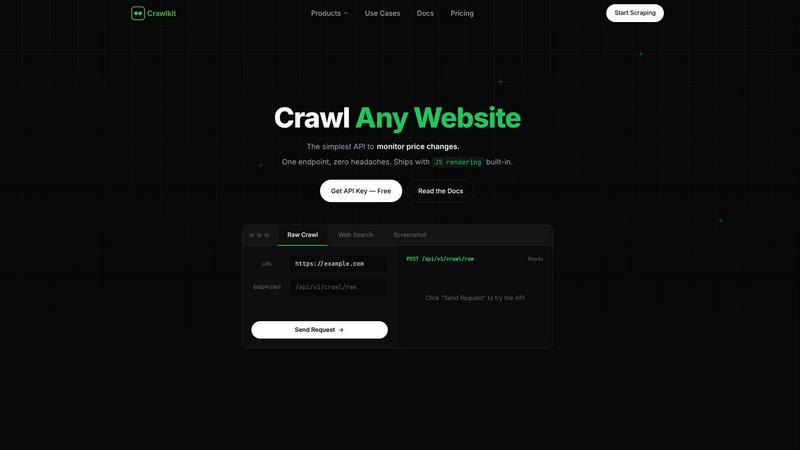

CrawlKit

CrawlKit is a versatile API that allows developers to easily scrape and extract structured data from any website.


About CrawlKit

CrawlKit is a web data extraction platform for developers and data teams who need reliable, consistent access to web data without the overhead of running their own scraping infrastructure. It handles the hard parts of modern web scraping: rotating proxies, headless browser rendering, anti-bot protections, and rate limits.

Users send a single API request, and the platform takes care of proxy rotation, browser rendering, retries, and avoiding blocks in the background. Teams can therefore spend their time analyzing and using the data rather than collecting it. Whether you need raw HTML, search results, visual snapshots, or professional data from platforms such as LinkedIn, CrawlKit provides one unified interface for these extraction needs. It also integrates with common tools and stacks such as AWS, Node.js, and Puppeteer, which makes it a flexible choice for data-driven projects.

Features of CrawlKit

Simplified API Access

CrawlKit provides a straightforward HTTP API for extracting structured data from websites and supported platforms with a single request. Because it is plain HTTP, it can be called from any programming language or automation tool without complex configuration, which makes it accessible to developers of all skill levels.
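To illustrate what a single-request integration can look like, here is a minimal Python sketch that uses only the standard library. The endpoint URL, the bearer-token `Authorization` scheme, and the JSON body fields (`url`, `render`) are hypothetical placeholders, not CrawlKit's documented API; check the official API reference for the real values.

```python
import json
import urllib.request

# Hypothetical endpoint: replace with the URL from CrawlKit's API documentation.
API_ENDPOINT = "https://api.crawlkit.example/v1/scrape"


def build_scrape_request(target_url: str, api_key: str, render: bool = True) -> urllib.request.Request:
    """Build (but do not send) one POST request asking the service to scrape target_url."""
    # Assumed body shape: the page to fetch and whether to render it in a headless browser.
    body = json.dumps({"url": target_url, "render": render}).encode("utf-8")
    return urllib.request.Request(
        API_ENDPOINT,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Assumed auth scheme: a bearer token carrying your API key.
            "Authorization": f"Bearer {api_key}",
        },
    )


def scrape(target_url: str, api_key: str) -> dict:
    """Send the request and decode the JSON response (needs network access and a real key)."""
    request = build_scrape_request(target_url, api_key)
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping request construction separate from sending makes it easy to inspect or unit-test the payload before any call is made.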

Comprehensive Data Extraction

With CrawlKit, users can gather a wide range of data types from platforms such as LinkedIn, Instagram, and app stores. From company profiles and job postings to user engagement metrics and app reviews, the platform offers end-to-end extraction that can be tailored to the specific data needs of different industries.

Robust Proxy Management

One of CrawlKit's standout features is built-in proxy rotation and management. It helps requests avoid being blocked by anti-bot mechanisms, allowing uninterrupted data collection. Users remain responsible for respecting the terms of service of the platforms they collect data from, which often impose strict limits on scraping.

Real-time Data Validation

CrawlKit waits for pages to finish loading and validates responses before returning them, so users receive complete, accurate data instead of partial or broken output. This improves the reliability of the collected data and streamlines the analysis that follows.

Use Cases of CrawlKit

CRM Enrichment

CrawlKit can automatically enrich customer relationship management (CRM) systems with LinkedIn profile data. Users can pull key information such as job titles, company details, and contact information for each lead, improving lead generation and sales strategies.
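As a rough illustration of the enrichment step, the sketch below merges scraped profile fields into an existing lead record, filling only the fields the CRM does not already have. The `Lead` fields and the profile keys (`title`, `company_name`, `email`) are hypothetical; the real response schema depends on CrawlKit's LinkedIn endpoint.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class Lead:
    """A simplified CRM lead record (hypothetical fields)."""
    name: str
    job_title: Optional[str] = None
    company: Optional[str] = None
    email: Optional[str] = None


# Assumed mapping from keys in the scraped profile to Lead fields.
PROFILE_FIELD_MAP = {"title": "job_title", "company_name": "company", "email": "email"}


def enrich_lead(lead: Lead, profile: dict) -> Lead:
    """Return a copy of lead with empty fields filled from the scraped profile.

    Existing CRM values are never overwritten, so manually curated data wins.
    """
    updates = {}
    for profile_key, lead_field in PROFILE_FIELD_MAP.items():
        value = profile.get(profile_key)
        # Only fill a field when the profile has a value and the lead lacks one.
        if value and getattr(lead, lead_field) is None:
            updates[lead_field] = value
    return replace(lead, **updates)
```

Filling only empty fields is one conservative design choice; a team that trusts fresher scraped data might prefer to overwrite existing values instead.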

Competitive Intelligence

Businesses can leverage CrawlKit to gather insights on competitors by monitoring their online presence across social platforms like Instagram. By tracking follower counts, engagement rates, and content performance, organizations can make informed decisions to enhance their competitive positioning.
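Engagement rate has no single standard definition. One common variant divides the average number of interactions (likes plus comments) per post by the follower count. The sketch below assumes that definition and hypothetical post fields (`likes`, `comments`); adjust it to the metrics your scraped Instagram data actually contains.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Post:
    """Interaction counts for one post (hypothetical fields)."""
    likes: int
    comments: int


def engagement_rate(posts: Sequence[Post], followers: int) -> float:
    """Average (likes + comments) per post, as a percentage of follower count.

    This is one common definition of engagement rate; others exist.
    """
    if followers <= 0:
        raise ValueError("followers must be positive")
    if not posts:
        return 0.0
    avg_interactions = sum(p.likes + p.comments for p in posts) / len(posts)
    return 100.0 * avg_interactions / followers


# Two posts with 100 and 200 interactions on an account with 10,000 followers.
print(engagement_rate([Post(90, 10), Post(180, 20)], followers=10_000))  # → 1.5
```

Comparing this figure across competitors is only meaningful if every account is measured with the same definition and over a similar window of posts.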

Market Research

Researchers can utilize CrawlKit to extract comprehensive data from app stores, enabling them to analyze app reviews, ratings, and trends. This data can inform product development and marketing strategies, helping businesses understand user preferences and market dynamics.

SEO and Content Strategy

CrawlKit can aid digital marketers in extracting search results and SERP data for SEO analysis. By obtaining clean, parsed results filtered by language, region, and time range, marketers can refine their strategies, ensuring that content is optimized for search engines and aligned with user interests.
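The sketch below shows one way to encode the language, region, and time-range filters as query parameters for a search-results request. The endpoint, the parameter names (`q`, `lang`, `region`, `time_range`), and the allowed time-range values are hypothetical placeholders, not CrawlKit's documented interface.

```python
from typing import Optional
from urllib.parse import urlencode

# Hypothetical endpoint and filter values; consult the real API reference.
SERP_ENDPOINT = "https://api.crawlkit.example/v1/search"
ALLOWED_TIME_RANGES = {"day", "week", "month", "year"}


def build_serp_url(
    query: str,
    lang: str = "en",
    region: str = "us",
    time_range: Optional[str] = None,
) -> str:
    """Build a search-results URL filtered by language, region, and an optional time range."""
    params = {"q": query, "lang": lang, "region": region}
    if time_range is not None:
        # Reject unknown values locally instead of sending a malformed request.
        if time_range not in ALLOWED_TIME_RANGES:
            raise ValueError(f"unsupported time_range: {time_range!r}")
        params["time_range"] = time_range
    # urlencode escapes the query; spaces become '+'.
    return f"{SERP_ENDPOINT}?{urlencode(params)}"


print(build_serp_url("web scraping api", lang="de", region="de", time_range="month"))
```

Validating filters on the client side catches typos before a request is sent.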

Frequently Asked Questions

How does CrawlKit handle anti-bot protections?

CrawlKit uses techniques such as proxy rotation and headless browser rendering to get past anti-bot protections on websites. This reduces the chance that requests are blocked or restricted while extracting data.

Is there a limit to the number of requests I can make?

No. CrawlKit uses a flexible credit-based pricing model with no monthly commitments or rate limits. Users start with 100 free credits and can purchase more as needed, so data extraction scales with demand.

What programming languages can I use with CrawlKit?

CrawlKit works with any programming language or automation tool that can make HTTP requests. Its simple HTTP API integrates easily with popular languages and runtimes such as JavaScript (Node.js), Python, and Java, making it suitable for a wide range of applications.

Can I get support if I encounter issues?

Yes, CrawlKit provides priority support to its users. Whether you have technical questions or need assistance with integration, the support team is available to help resolve issues promptly and efficiently.


Top Alternatives to CrawlKit

TubeAnalytics

TubeAnalytics is a powerful YouTube analytics platform built for serious creators who want clarity for growth.

Fusedash

Fusedash transforms raw data into interactive dashboards and charts, enabling teams to act on insights instantly.

Linkfinder AI

LinkFinder AI enriches your data instantly with accurate company details from multiple trusted sources.

BlitzAPI

BlitzAPI provides scalable B2B data through powerful APIs, empowering your growth team's strategies with validated.

echoloc

Echoloc transforms job posts into actionable insights, revealing buying signals for sales teams to target potential.

FilexHost

Effortlessly host and share any file with instant URLs using FilexHost's simple drag-and-drop interface.